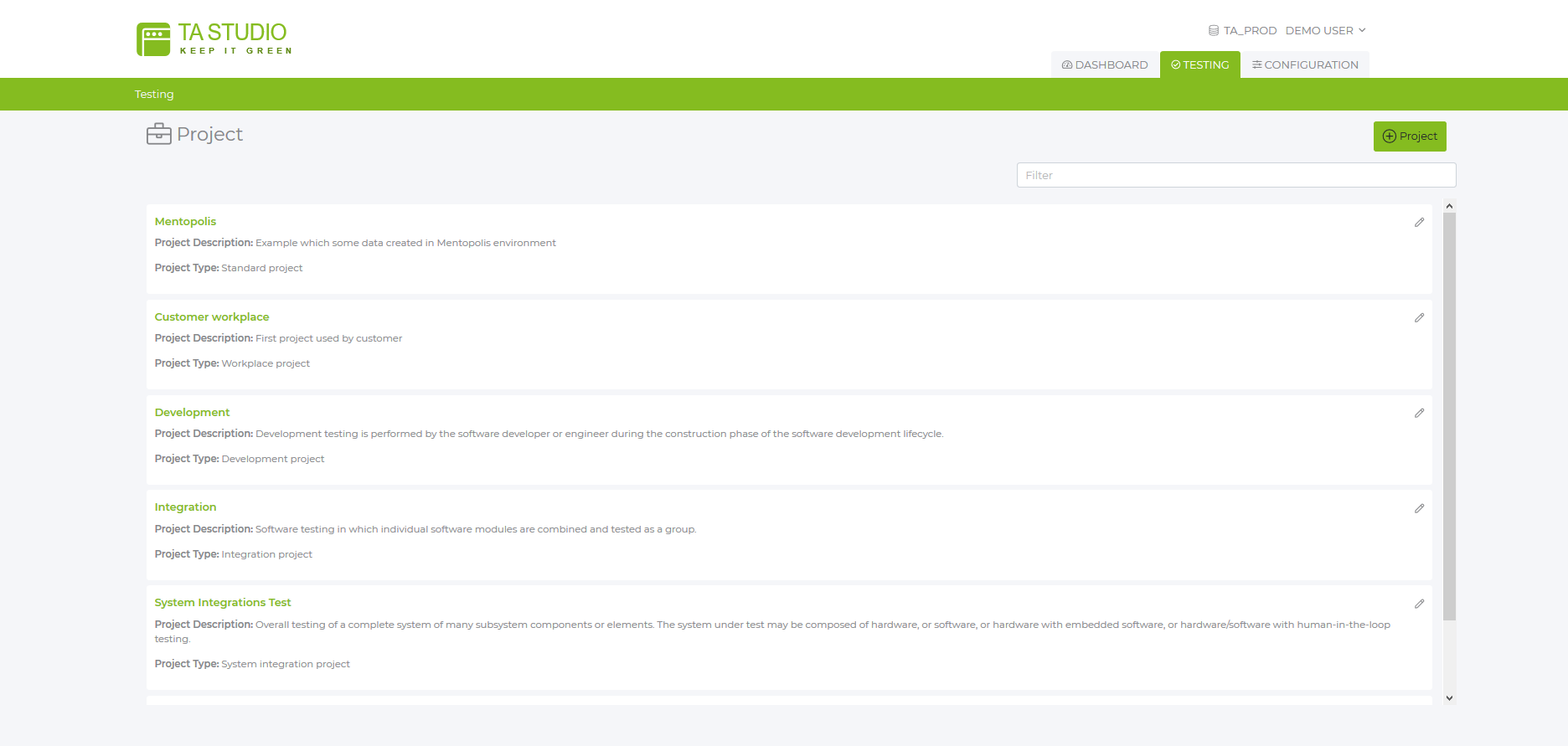

The KIG repository is the backbone of TA Studio. It is built on a relational database that manages all relevant information, from test parameters and test results to the hardware and software information of all test clients (SUTs). The KIG repository provides the data for tests and is the basis for reporting, statistics, and analysis during evaluation.

Repository Database

All information managed and/or generated by TA Studio is stored in a commercially available relational database, ensuring reliable and versatile data storage.

Test definitions

The repository contains all the information about your test cases in a hierarchical structure.

Each change to a test definition is logged, so the test definitions underlying every test run remain traceable.

The test subject is the object to be tested. In workplace administration, this is typically an "application version" that is to be used at the workstations, which makes it possible to assign an "application" from the application databases. A test subject can also represent a cross-application business process. In general, a test subject can be any software unit to be tested. Define it according to your requirements.

Each test subject can be divided into several modules. This is especially useful for larger test subjects such as large applications or application suites (e.g. Office 365 can be divided into modules for Word, Excel, etc.), or for structuring large business processes. For small test subjects, a single test module can be sufficient. A single parameter set can be provided for each test module (see next section).

Any number of test cases can be defined for each module. Each test case corresponds to a script in Eggplant (one script can be used for multiple test cases). A test case can consist of a single atomic test step (as used in Eggplant's DAI module) or a complete workflow with many test steps, as needed.

Any number of parameter sets can be defined for each test case. The parameters are the values of input fields, list selection options, and so on, as well as the expected result values needed to perform a test and verify its results. During a test run, each test case is executed once per parameter set, as defined in the test scenario (see below). Configuring multiple parameter sets with the same parameters but different values lets a single script run with different data constellations.
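The hierarchy described above (test subject, modules, test cases, parameter sets) can be pictured as nested records. The following is only a minimal sketch: the class names, field names, script names, and sample values are hypothetical and do not reflect the actual repository schema of TA Studio.

```python
from dataclasses import dataclass, field


@dataclass
class ParameterSet:
    name: str
    values: dict  # input values and expected results for one run


@dataclass
class CaseDef:
    name: str
    script: str  # hypothetical script name; one script may back several test cases
    parameter_sets: list = field(default_factory=list)


@dataclass
class ModuleDef:
    name: str
    cases: list = field(default_factory=list)


@dataclass
class SubjectDef:
    name: str
    modules: list = field(default_factory=list)


def planned_runs(subject):
    """List every (module, test case, parameter set) combination."""
    return [
        (m.name, c.name, p.name)
        for m in subject.modules
        for c in m.cases
        for p in c.parameter_sets
    ]


# Illustrative data only: two modules that reuse the same script.
office = SubjectDef("Office 365", [
    ModuleDef("Word", [
        CaseDef("Save document", "save_document", [
            ParameterSet("short name", {"file_name": "a.docx", "expected": "saved"}),
            ParameterSet("empty name", {"file_name": "", "expected": "error"}),
        ]),
    ]),
    ModuleDef("Excel", [
        CaseDef("Save workbook", "save_document", [
            ParameterSet("default", {"file_name": "b.xlsx", "expected": "saved"}),
        ]),
    ]),
])

runs = planned_runs(office)
```

Here `runs` contains three entries, one per parameter set, even though only a single script is involved.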

In contrast to the items described above, the test scenario is created by the TAS Analyst. A test scenario defines a test run. The analyst adds modules and their test cases to the scenario (or removes them) and selects the parameter sets to use for each test case. When the scenario is executed, all test cases it contains are run one after the other. Schedules can be stored for each scenario to control the execution of the tests in the Eggplant Manager.
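The execution order of a scenario can be sketched as two nested loops: test cases run one after the other, and each test case runs once per selected parameter set. This is an illustration under assumptions, not how TA Studio or Eggplant Manager is implemented; `check_sum` is a hypothetical stand-in for a test script, and the scenario layout is invented for the example.

```python
def check_sum(params):
    """Hypothetical test script: passes when the actual result matches the expected one."""
    return params["a"] + params["b"] == params["expected"]


def run_scenario(scenario):
    """Run each test case once per selected parameter set, in order."""
    results = []
    for case_name, script, parameter_sets in scenario:
        for set_name, params in parameter_sets.items():
            results.append((case_name, set_name, script(params)))
    return results


# A scenario lists test cases together with the parameter sets chosen for them.
scenario = [
    ("addition", check_sum, {
        "small numbers": {"a": 1, "b": 2, "expected": 3},
        "wrong expectation": {"a": 2, "b": 2, "expected": 5},
    }),
]

results = run_scenario(scenario)
```

Each entry in `results` records the test case, the parameter set, and whether the check passed, so the second parameter set above is reported as a failure.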

One of the main advantages of TA Studio is that it makes parameterized test scripts easy to use. The parameters are entered and managed using the forms provided by TA Studio. The scripts access these parameters using methods provided by the SenseTalk EEP library included in the TA Studio suite.

The parameter sets are created by the TAS Engineer. Because no scripting knowledge is required, the TAS Analyst can modify or extend them, e.g. to add or change input parameters for an existing test case.

Global parameters that only depend on the test environment (e.g. file paths, TA database name, etc.) only need to be configured once.

Further parameters can be configured at module level. These are the module-dependent parameters (access paths, labels, etc.), which are the same for all test cases of the module. This is a very powerful option: for example, using "XPath parameters" for Selenium white-box tests greatly reduces the maintenance effort for the test scripts.

As described above, test case parameters are specific to each test case and essentially contain the input data to use and the output data to expect when performing the test. The ability to create multiple parameter sets for each test case makes it possible to run the same script with different data constellations. In this way, test coverage can be significantly increased with minimal effort.
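The three parameter levels (global, module, test case) can be thought of as layers that are combined into one lookup for each run. The sketch below uses Python's `collections.ChainMap` for this. It assumes that a more specific level takes precedence over a more general one, which the text above does not state, and all parameter names and values are hypothetical.

```python
from collections import ChainMap

# Configured once per test environment (hypothetical names and values).
global_params = {"report_dir": "/tmp/reports", "database": "ta_db"}

# Shared by all test cases of one module, e.g. an XPath for a UI element.
module_params = {"login_button_xpath": "//button[@id='login']"}

# Several parameter sets for one test case: same keys, different values.
case_param_sets = {
    "valid login": {"user": "alice", "expected": "Welcome"},
    "invalid login": {"user": "mallory", "expected": "Access denied"},
}


def resolve(set_name):
    """Merge the three levels; earlier maps win on duplicate keys (assumption)."""
    return dict(ChainMap(case_param_sets[set_name], module_params, global_params))


valid = resolve("valid login")
invalid = resolve("invalid login")
```

If the XPath of the login button changes, only `module_params` needs updating; the parameter sets and the script stay untouched, which is the maintenance benefit described above.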

To link the tests performed to the system they ran on, TA Studio collects the relevant hardware and software information of each test machine (SUT, system under test). This data makes it possible to release end devices specifically for a given hardware and software configuration. The test results and their associated SUT data are available for auditing.
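One way to picture the link between test results and SUT data is a result record that carries the configuration it was obtained on. The following sketch is an assumption about how such data could be evaluated, not a description of TA Studio's reporting; the record fields and the release rule (a configuration counts as releasable only if every recorded test on it passed) are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SutInfo:
    hostname: str
    os_version: str
    ram_gb: int


@dataclass(frozen=True)
class ResultRecord:
    test_case: str
    passed: bool
    sut: SutInfo


def releasable_configurations(results):
    """Return the (os_version, ram_gb) pairs on which every recorded test passed."""
    outcome = {}
    for r in results:
        key = (r.sut.os_version, r.sut.ram_gb)
        outcome[key] = outcome.get(key, True) and r.passed
    return {key for key, ok in outcome.items() if ok}


# Illustrative data only.
pc1 = SutInfo("pc-001", "Windows 11 23H2", 16)
pc2 = SutInfo("pc-002", "Windows 10 22H2", 8)

records = [
    ResultRecord("Save document", True, pc1),
    ResultRecord("Print document", True, pc1),
    ResultRecord("Save document", True, pc2),
    ResultRecord("Print document", False, pc2),
]

released = releasable_configurations(records)
```

Because each record keeps its `SutInfo`, the same data also supports an audit of which machine produced which result.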